Google Core Update, Crawl Limits, and Gemini Traffic Trends Reshape Search Landscape

Google rolls out the March 2026 core update, details Googlebot crawl limits, and sees Gemini AI referral traffic surge, signaling changes in search and indexing.

Google has begun rolling out its March 2026 core update. At the same time, the company has published new technical details about how its crawling systems work, and third-party data shows rising referral traffic from its AI product, Gemini. Together, these developments reflect changes in how content is ranked, processed, and discovered across Google’s ecosystem.

March 2026 Core Update Begins Rolling Out

Google confirmed last week that it has started deploying its first broad core update of 2026. The rollout is expected to take up to two weeks to complete.

According to Google, the update is a routine adjustment intended to improve how its systems surface relevant and useful content across different types of websites. The rollout began shortly after the completion of a separate March spam update, which concluded in less than a day.

This marks the first time since late December 2025 that Google Search rankings have undergone a broad recalibration. A February update earlier this year applied only to Discover and did not affect general search rankings.

Timing and Expected Impact

Ranking volatility is expected throughout early April as the update progresses. Google advises site owners to wait until at least one week after the rollout completes before evaluating performance changes in Search Console. Comparisons should be made against a baseline period before March 27.
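As a rough illustration of that advice, the comparison windows can be derived from the rollout dates. This is a minimal sketch, not a Google-recommended method: the March 27 start date is inferred from the baseline guidance above, while the April 10 completion date, the 14-day window length, and the function name `evaluation_windows` are all hypothetical.

```python
from datetime import date, timedelta


def evaluation_windows(rollout_start, rollout_end, window_days=14, wait_days=7):
    """Return (baseline, evaluation) windows as (first_day, last_day) tuples."""
    if rollout_end < rollout_start:
        raise ValueError("rollout_end must not precede rollout_start")
    # Baseline: the window_days days ending the day before the rollout began.
    baseline_end = rollout_start - timedelta(days=1)
    baseline_start = baseline_end - timedelta(days=window_days - 1)
    # Evaluation: starts wait_days after the rollout completes.
    eval_start = rollout_end + timedelta(days=wait_days)
    eval_end = eval_start + timedelta(days=window_days - 1)
    return (baseline_start, baseline_end), (eval_start, eval_end)


# Hypothetical completion date: two weeks after the assumed March 27 start.
baseline, evaluation = evaluation_windows(date(2026, 3, 27), date(2026, 4, 10))
print("baseline:  ", baseline[0], "to", baseline[1])
print("evaluation:", evaluation[0], "to", evaluation[1])
```

Using windows of equal length that cover the same days of the week keeps weekday and weekend traffic patterns comparable between the two periods.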

Google Search Relations team member John Mueller addressed questions about overlap between the spam and core updates, stating that the two serve different purposes. He indicated that uncertainty about whether a site qualifies as spam may itself be a signal of potential issues.

Mueller also noted that core updates are not deployed as a single system-wide change. Instead, they involve multiple systems and teams, resulting in staggered rollouts and ranking fluctuations that can occur in waves.

Industry observers, including Roger Montti, have suggested that the proximity of the spam update to the core update may reflect a broader effort to reassess content quality.

Google Details Googlebot Architecture and Crawl Limits

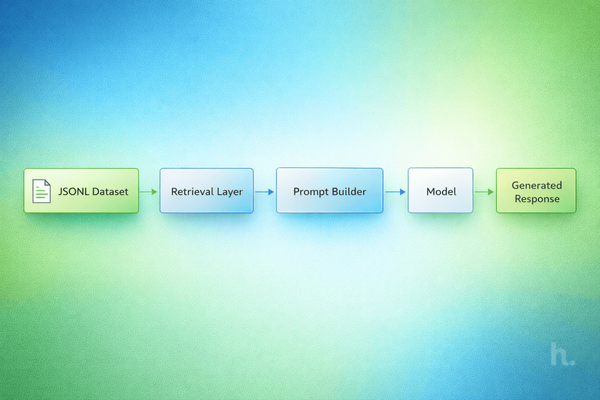

In parallel with the core update, Google published new technical information about how its crawling systems operate.

Gary Illyes explained that Googlebot functions as one client within a centralized crawling infrastructure used across multiple Google services, including Shopping and AdSense. Each service accesses the system with its own configuration and crawler identity.

The 2 MB Crawl Limit

Google recently introduced a 2 MB fetch limit for Googlebot in Search. Illyes clarified that this limit includes HTTP response headers and applies specifically to Search crawling. Other Google systems may operate under a broader default limit of 15 MB.

When a page exceeds the 2 MB threshold, Googlebot does not reject it. Instead, it stops fetching additional data and forwards the truncated content for indexing. Any content beyond that limit is not processed or indexed.
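The truncation behavior described above can be approximated locally. The sketch below is only a model of the idea, not Googlebot's actual logic. It assumes "2 MB" means 2 × 1024 × 1024 bytes (the article does not specify), estimates header size from a simple name/value serialization, and ignores the status line. The names `header_size` and `truncate_body` are hypothetical.

```python
FETCH_LIMIT_BYTES = 2 * 1024 * 1024  # assumption: "2 MB" read as 2 MiB


def header_size(headers):
    """Approximate serialized header size: each 'Name: value' line plus CRLF."""
    return sum(len(f"{name}: {value}".encode("latin-1")) + 2
               for name, value in headers.items())


def truncate_body(headers, body, limit=FETCH_LIMIT_BYTES):
    """Return the part of body that fits once header bytes are counted."""
    budget = max(limit - header_size(headers), 0)
    return body[:budget]


headers = {"Content-Type": "text/html"}
oversized = b"x" * (3 * 1024 * 1024)  # a 3 MiB body, larger than the limit
kept = truncate_body(headers, oversized)
print(len(kept), "bytes kept,", len(oversized) - len(kept), "bytes dropped")
```

Anything in the dropped portion would, under this model, never reach indexing.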

This behavior has practical implications for sites with large inline elements such as base64-encoded images, embedded CSS or JavaScript, or oversized navigation structures. External resources, however, are counted separately and do not contribute to the same byte limit.
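To see how much of a page's HTML is taken up by inline elements of this kind, a rough audit can be written with Python's standard `html.parser` module. This is a sketch under simplifying assumptions: it counts only inline `<script>` and `<style>` bodies and attribute values that start with `data:`, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class InlineWeight(HTMLParser):
    """Tally bytes of inline scripts, inline styles, and data: URIs."""

    def __init__(self):
        super().__init__()
        self.bytes_by_kind = {"script": 0, "style": 0, "data_uri": 0}
        self._inline_tag = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        for value in attr_map.values():
            # data: URIs embed the resource in the HTML itself.
            if value and value.strip().lower().startswith("data:"):
                self.bytes_by_kind["data_uri"] += len(value.encode("utf-8"))
        # A <script> with a src attribute is external, so it is skipped.
        if tag == "style" or (tag == "script" and "src" not in attr_map):
            self._inline_tag = tag

    def handle_endtag(self, tag):
        if tag == self._inline_tag:
            self._inline_tag = None

    def handle_data(self, data):
        if self._inline_tag:
            self.bytes_by_kind[self._inline_tag] += len(data.encode("utf-8"))


def inline_weight(html):
    parser = InlineWeight()
    parser.feed(html)
    parser.close()
    return parser.bytes_by_kind


sample = (
    '<html><head><style>body{color:red}</style>'
    '<script src="a.js"></script><script>var x=1;</script></head>'
    '<body><img src="data:image/png;base64,AAAA"></body></html>'
)
print(inline_weight(sample))
```

Large totals in any of these buckets suggest candidates for moving into external files, which are counted separately.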

Illyes noted that the 2 MB threshold is not fixed and may change over time as web standards evolve.

Industry Response

Cyrus Shepard commented that while most pages are unlikely to hit the limit, unusually large pages may experience indexing gaps if critical content falls beyond the first 2 MB.

Page Size Growth Raises Indexing Considerations

Additional context on page size and crawling emerged from a recent episode of Google’s Search Off the Record podcast, featuring Illyes and Martin Splitt.

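They noted that average web page sizes have nearly tripled over the past decade. Data from the 2025 Web Almanac places the median mobile homepage at approximately 2.3 MB, slightly above Googlebot’s current 2 MB fetch limit for Search. That figure reflects total page weight, however, and external resources are counted separately against the limit.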

Illyes raised the possibility that structured data, which Google encourages for enhanced search features, may be contributing to increased page weight. While structured data can improve visibility in search results, it also adds to the total size of a page.

Splitt indicated that Google plans to provide guidance on reducing page weight in future discussions. In the meantime, developers may need to ensure that essential content appears early in the HTML response to avoid being excluded during truncated crawls.
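One way to check that essential content appears early is to measure the byte offset at which it ends in the HTML response. The sketch below assumes the end of the essential content can be identified by a marker string such as `</main>`, and it assumes a budget of 2 × 1024 × 1024 bytes. Both are illustrative choices, and the function names are hypothetical.

```python
from typing import Optional

BUDGET_BYTES = 2 * 1024 * 1024  # assumption: "2 MB" read as 2 MiB


def marker_end_offset(html: str, marker: str) -> Optional[int]:
    """Byte offset just past the first occurrence of marker, or None."""
    data = html.encode("utf-8")
    needle = marker.encode("utf-8")
    index = data.find(needle)
    return None if index == -1 else index + len(needle)


def within_budget(html: str, marker: str, budget: int = BUDGET_BYTES) -> bool:
    """True if the marker is present and ends within the byte budget."""
    end = marker_end_offset(html, marker)
    return end is not None and end <= budget


padding = "x" * 3_000_000  # stands in for a large inline style or script
content = '<main id="content">Hello</main>'
content_first = f"<html><body>{content}<script>{padding}</script></body></html>"
content_last = f"<html><head><style>{padding}</style></head><body>{content}</body></html>"

print(within_budget(content_first, "</main>"))  # True
print(within_budget(content_last, "</main>"))   # False
```

Because response headers also count toward the limit, a real check would pass a budget somewhat smaller than the full threshold.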

Gemini Referral Traffic Surges

New third-party data suggests that Google’s AI platform, Gemini, is becoming a more significant source of referral traffic.

According to analysis from SE Ranking, Gemini’s referral traffic to websites increased by 115 percent between November 2025 and January 2026. The growth coincides with the rollout of Gemini 3.

By January, Gemini was generating more referral traffic than Perplexity AI, exceeding it by 29 percent globally and 41 percent in the United States.

Despite this growth, ChatGPT remains the dominant source of AI-driven referral traffic, accounting for roughly 80 percent of the total. However, its lead has narrowed significantly: ChatGPT’s referral volume fell from approximately 22 times Gemini’s in October to about 8 times in January.
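The relationships between these figures can be worked through with some back-of-envelope arithmetic. The implied shares below are not reported by SE Ranking. They follow only if the 80 percent share, the 8 times gap, and the 29 percent lead all describe the same period and the same measurement, which is an assumption.

```python
# Reported figures from the analysis cited above.
growth_pct = 115          # Gemini referral growth, November to January
chatgpt_share = 0.80      # ChatGPT's share of AI-driven referrals
gap_october = 22          # ChatGPT referrals as a multiple of Gemini's
gap_january = 8
gemini_lead_global = 0.29  # Gemini's lead over Perplexity, globally

# A 115 percent increase means traffic is 2.15 times its starting level.
growth_multiplier = 1 + growth_pct / 100

# The gap shrank by a factor of 22 / 8.
gap_narrowing = gap_october / gap_january

# Implied shares (derived, not reported): Gemini is one eighth of ChatGPT,
# and Perplexity is Gemini divided by 1.29.
implied_gemini_share = chatgpt_share / gap_january
implied_perplexity_share = implied_gemini_share / (1 + gemini_lead_global)

print(f"growth multiplier: {growth_multiplier:.2f}x")
print(f"gap narrowed by a factor of {gap_narrowing:.2f}")
print(f"implied Gemini share: {implied_gemini_share:.1%}")
print(f"implied Perplexity share: {implied_perplexity_share:.1%}")
```

On these assumptions, Gemini would account for roughly a tenth of AI-driven referrals, which is consistent with ChatGPT still holding a large lead.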

Scale and Context

AI-driven referrals remain a small portion of overall internet traffic. Combined, AI platforms account for approximately 0.24 percent of global traffic, up from 0.15 percent in 2025, a relative increase of about 60 percent.
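Site owners who want to measure this share in their own analytics can group visits by referrer hostname. The sketch below is illustrative only. The hostname mapping is an assumption that should be checked against real referrer data, and the function names and sample referrers are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit

# Assumed referrer hostnames; verify against your own logs before relying on them.
AI_REFERRER_HOSTS = {
    "gemini.google.com": "Gemini",
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
}


def classify(referrer):
    """Map a referrer URL to an AI platform name, or 'other'."""
    host = (urlsplit(referrer).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host, "other")


def ai_share(referrers):
    """Return per-source counts and the fraction of visits from AI platforms."""
    counts = Counter(classify(r) for r in referrers)
    total = sum(counts.values())
    ai_visits = total - counts["other"]
    return counts, (ai_visits / total if total else 0.0)


sample_referrers = [
    "https://gemini.google.com/app",
    "https://chatgpt.com/",
    "https://chatgpt.com/c/example",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/",
    "https://www.google.com/search?q=example",
    "https://example.org/blog",
    "",  # direct visit with no referrer
]
counts, share = ai_share(sample_referrers)
print(dict(counts), f"AI share: {share:.0%}")
```

The sample data is deliberately skewed toward AI referrers to exercise each branch; on a real site the share would typically be far smaller.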

While Gemini’s growth is notable, the data covers only a two-month period and may reflect short-term effects tied to product updates rather than a sustained long-term trend.

Increased Transparency Around Google Systems

A notable pattern across these developments is that Google is communicating more openly about how its systems operate.

The company has published new documentation on crawl limits, discussed infrastructure decisions in podcasts, and clarified aspects of core update deployment through public statements. These disclosures provide additional context beyond formal documentation and offer insight into how ranking and crawling systems function in practice.

At the same time, Google has not provided comparable detail about how traffic flows through its AI products, even as referral volumes increase.

Conclusion

The March 2026 core update, new disclosures about Googlebot’s architecture, and rising AI referral traffic collectively point to a shifting search environment.

Ranking systems continue to evolve through periodic updates, while technical constraints such as crawl limits are becoming more clearly defined. Meanwhile, AI platforms are beginning to play a measurable, though still limited, role in directing traffic across the web.

For publishers and developers, these changes highlight the importance of monitoring both ranking performance and technical factors such as page size, while also tracking emerging traffic sources beyond traditional search.